Vidhi Jain
email: {first name}{last name} at cmu dot edu

Hi, I am a PhD student at the Robotics Institute (RI), part of the School of Computer Science at Carnegie Mellon University (CMU), where I am advised by Yonatan Bisk. Previously, I worked at Google DeepMind Robotics, Meta AI, and Microsoft Research India.

I am interested in learning algorithms for interactive and adaptable embodied AI. My long-term vision is to develop robots that can perform multiple tasks around the home and learn new skills from their users. My research focuses on the intersection of language, vision, and actions to enhance real-time perception, motion control, and dialog in robots.

News

Research

Vid2Robot: End-to-end Video-conditioned Policy Learning with Cross-Attention Transformers
Vidhi Jain, Maria Attarian, Nikhil J Joshi, Ayzaan Wahid, Danny Driess, Quan Vuong, Pannag R Sanketi, Pierre Sermanet, Stefan Welker, Christine Chan, Igor Gilitschenski, Yonatan Bisk, Debidatta Dwibedi. Preprint 2024.
webpage | arXiv | BibTeX

Towards General-Purpose Robots via Foundation Models: A Survey and Meta-Analysis
Yafei Hu*, Quanting Xie*, Vidhi Jain*, Jonathan Francis, Jay Patrikar, Nikhil Keetha, Seungchan Kim, Yaqi Xie, Tianyi Zhang, Shibo Zhao, Yu Quan Chong, Chen Wang, Katia Sycara, Matthew Johnson-Roberson, Dhruv Batra, Xiaolong Wang, Sebastian Scherer, Zsolt Kira, Fei Xia, Yonatan Bisk. Preprint 2024.
webpage | arXiv | BibTeX

FlexCap: Generating Rich, Localized, and Flexible Captions in Images
Debidatta Dwibedi, Vidhi Jain, Jonathan Tompson, Andrew Zisserman, Yusuf Aytar. Preprint 2024.
webpage | arXiv | BibTeX

How to Prompt Your Robot: A PromptBook for Manipulation Skills with Code as Policies
Montserrat Gonzalez Arenas, Ted Xiao, Sumeet Singh, Vidhi Jain, Allen Z. Ren, Quan Vuong, Jacob Varley, Alexander Herzog, Isabel Leal, Sean Kirmani, Mario Prats, Dorsa Sadigh, Vikas Sindhwani, Kanishka Rao, Jacky Liang, Andy Zeng. Preprint 2023.
arXiv | BibTeX

Open X-Embodiment: Robotic Learning Datasets and RT-X Models
Open X-Embodiment Collaboration, Abhishek Padalkar, Acorn Pooley, Ajinkya Jain, Alex Bewley, Alex Herzog, Alex Irpan, Alexander Khazatsky, Anant Rai, Anikait Singh, Anthony Brohan, Antonin Raffin, Ayzaan Wahid, Ben Burgess-Limerick, Beomjoon Kim, Bernhard Schölkopf, Brian Ichter, Cewu Lu, Charles Xu, Chelsea Finn, Chenfeng Xu, Cheng Chi, Chenguang Huang, Christine Chan, Chuer Pan, Chuyuan Fu, Coline Devin, Danny Driess, Deepak Pathak, Dhruv Shah, Dieter Büchler, Dmitry Kalashnikov, Dorsa Sadigh, Edward Johns, Federico Ceola, Fei Xia, Freek Stulp, Gaoyue Zhou, Gaurav S. Sukhatme, Gautam Salhotra, Ge Yan, Giulio Schiavi, Hao Su, Hao-Shu Fang, Haochen Shi, Heni Ben Amor, Henrik I Christensen, Hiroki Furuta, Homer Walke, Hongjie Fang, Igor Mordatch, Ilija Radosavovic, Isabel Leal, Jacky Liang, Jaehyung Kim, Jan Schneider, Jasmine Hsu, Jeannette Bohg, Jeffrey Bingham, Jiajun Wu, Jialin Wu, Jianlan Luo, Jiayuan Gu, Jie Tan, Jihoon Oh, Jitendra Malik, Jonathan Tompson, Jonathan Yang, Joseph J. Lim, João Silvério, Junhyek Han, Kanishka Rao, Karl Pertsch, Karol Hausman, Keegan Go, Keerthana Gopalakrishnan, Ken Goldberg, Kendra Byrne, Kenneth Oslund, Kento Kawaharazuka, Kevin Zhang, Keyvan Majd, Krishan Rana, Krishnan Srinivasan, Lawrence Yunliang Chen, Lerrel Pinto, Liam Tan, Lionel Ott, Lisa Lee, Masayoshi Tomizuka, Maximilian Du, Michael Ahn, Mingtong Zhang, Mingyu Ding, Mohan Kumar Srirama, Mohit Sharma, Moo Jin Kim, Naoaki Kanazawa, Nicklas Hansen, Nicolas Heess, Nikhil J Joshi, Niko Suenderhauf, Norman Di Palo, Nur Muhammad Mahi Shafiullah, Oier Mees, Oliver Kroemer, Pannag R Sanketi, Paul Wohlhart, Peng Xu, Pierre Sermanet, Priya Sundaresan, Quan Vuong, Rafael Rafailov, Ran Tian, Ria Doshi, Roberto Martín-Martín, Russell Mendonca, Rutav Shah, Ryan Hoque, Ryan Julian, Samuel Bustamante, Sean Kirmani, Sergey Levine, Sherry Moore, Shikhar Bahl, Shivin Dass, Shuran Song, Sichun Xu, Siddhant Haldar, Simeon Adebola, Simon Guist, Soroush Nasiriany, Stefan Schaal, Stefan Welker, Stephen Tian, Sudeep Dasari, Suneel Belkhale, Takayuki Osa, Tatsuya Harada, Tatsuya Matsushima, Ted Xiao, Tianhe Yu, Tianli Ding, Todor Davchev, Tony Z. Zhao, Travis Armstrong, Trevor Darrell, Vidhi Jain, Vincent Vanhoucke, Wei Zhan, Wenxuan Zhou, Wolfram Burgard, Xi Chen, Xiaolong Wang, Xinghao Zhu, Xuanlin Li, Yao Lu, Yevgen Chebotar, Yifan Zhou, Yifeng Zhu, Ying Xu, Yixuan Wang, Yonatan Bisk, Yoonyoung Cho, Youngwoon Lee, Yuchen Cui, Yueh-hua Wu, Yujin Tang, Yuke Zhu, Yunzhu Li, Yusuke Iwasawa, Yutaka Matsuo, Zhuo Xu, Zichen Jeff Cui. Preprint 2023.
webpage | arXiv | code | BibTeX

Spatial Language Attention Policies for Efficient Robot Learning
Priyam Parashar, Vidhi Jain, Xiaohan Zhang, Jay Vakil, Sam Powers, Yonatan Bisk, and Chris Paxton. 7th Annual Conference on Robot Learning (CoRL) 2023.
webpage | arXiv | code | reviews | BibTeX

HomeRobot: Open-Vocabulary Mobile Manipulation
Sriram Yenamandra, Arun Ramachandran, Karmesh Yadav, Austin S Wang, Mukul Khanna, Theophile Gervet, Tsung-Yen Yang, Vidhi Jain, Alexander Clegg, John M Turner, Zsolt Kira, Manolis Savva, Angel X Chang, Devendra Singh Chaplot, Dhruv Batra, Roozbeh Mottaghi, Yonatan Bisk, Chris Paxton. 7th Annual Conference on Robot Learning (CoRL) 2023.
webpage | arXiv | code | reviews | BibTeX

Transformers are Adaptable Task Planners
Vidhi Jain, Yixin Lin, Eric Undersander, Yonatan Bisk, and Akshara Rai. 6th Annual Conference on Robot Learning (CoRL) 2022.
webpage | arXiv | video | code | reviews | BibTeX

MAEA: Multimodal Attribution in Embodied AI
Vidhi Jain, Jayant Sravan Tamarapalli, Sahiti Yerramilli, and Yonatan Bisk. NeurIPS Workshop on Trustworthy Embodied AI 2022.
webpage | arXiv | video | reviews | BibTeX

Towards Explainable Embodied AI
Vidhi Jain. Master's thesis 2021.
pdf | BibTeX

Learning to capture spatial semantic priors for indoor navigation
Vidhi Jain, Shishir Patil, Prakhar Agarwal, and Katia Sycara. NeurIPS Object Representations for Learning and Reasoning (ORLR) 2020.
pdf | webpage | arXiv | video | code | BibTeX

Predicting strategies in simulated search and rescue tasks
Vidhi Jain, Rohit Jena, Huao Li, Tejus Gupta, Dana Hughes, Michael Lewis, and Katia Sycara. NeurIPS AI for Humanitarian Assistance and Disaster Response (AIADR) 2020.
arXiv | video | slides | BibTeX

Learning to navigate in unseen cluttered environments
Vidhi Jain, Ganesh Iyer, and Katia Sycara. NeurIPS Women in Machine Learning workshop (WiML) 2020.
pdf | poster | BibTeX

Coping with sample inefficiency in deep reinforcement learning
Vidhi Jain, Simin Liu, and Ganesh Iyer. ICML Women in Machine Learning Un-Workshop (WiML) 2020.
pdf | slides | BibTeX

Investigating the viability of Generative Models for Novelty Detection
Vidhi Jain. Bachelor's thesis 2018.
pdf | BibTeX

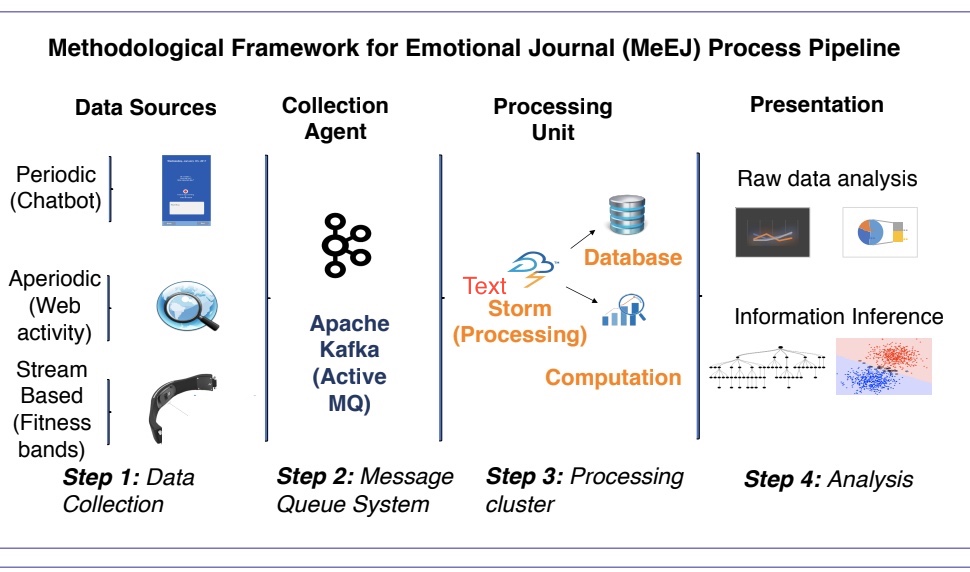

Symptomatic Diagnosis and Prognosis of Psychiatric Disorders through Personal Gadgets
Vidhi Jain, Simin Liu, and Ganesh Iyer. ACM CHI Extended Abstracts (CHI EA '17) 2017.
pdf | webpage | slides | poster | BibTeX

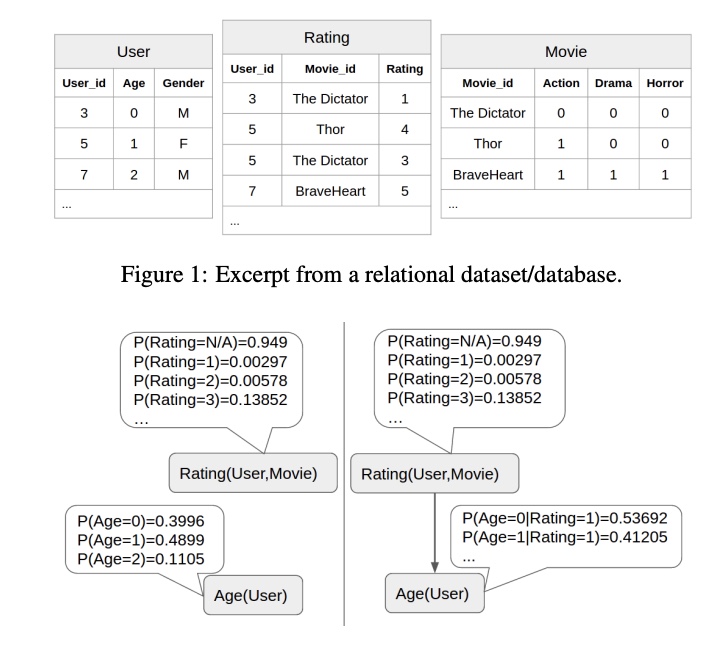

Model Selection Scores for Multi-Relational Bayesian Networks
Sajjad Gholami, Oliver Schulte, Vidhi Jain, Qiang Zhao. IJCAI Declarative Learning Based Programming (DeLBP) 2017.
pdf | code | BibTeX

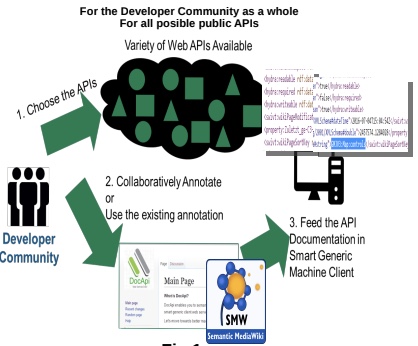

Empowering API Consumer Community: Collaborative Annotation of Web API Documentation for Semantically Structured Format
Vidhi Jain and Matthias Frank. Grace Hopper Conference India (GHCI) 2016.
pdf | poster

Talks

Education

Design and source code from Leonid Keselman's Jekyll fork and Jon Barron's website.